AI Tutor: Scaling support without scaling human effort

How AI improved the learning experience and reduced churn

Company: Hotmart

Role: Senior Product Designer

Scope: End-to-end

Team: Support

Timeline: 60 days

context

Hotmart is a global digital products platform that connects content creators with millions of students through online courses, memberships, and educational content.

In this model, creators can easily scale distribution and revenue — but student support remains dependent on manual effort.

As the user base grows, answering questions individually becomes unfeasible. At the same time, students expect fast responses within the learning experience itself.

This creates a gap between content scalability and support scalability.

Students

Unanswered questions

No feedback on comments

Frustration leads to drop-off

Creators

Support demand doesn’t scale

Repetitive questions

Lack of visibility into real student struggles

→ Support does not keep up with audience growth

How might we scale student support without proportionally increasing creator effort, while maintaining quality, context, and response speed?

Hypotheses

If we provide contextual and immediate AI-powered support within the learning experience, we can scale student support without increasing creator effort, improving engagement and retention.

In-context support increases usage

If support is embedded within the lesson (instead of external channels), more students will use it.

Expected impact:

↑ Feature adoption

↑ Interactions per student

Immediate responses reduce drop-off

If students receive answers at the moment of doubt, they will experience less frustration and lower drop-off rates.

Expected impact:

↑ Course completion rate

↓ In-course churn

AI resolves repetitive questions

If AI is trained on course content, it can handle most recurring questions without human intervention.

Expected impact:

↓ Support ticket volume

↓ Time spent on support

Feedback improves AI quality

If users can rate responses, the system will continuously learn and improve.

Expected impact:

↑ Positive response rate

↓ Repeated questions

Heuristics Adopted

To guide product decisions, we defined key principles for the experience.

✸

In-context support

Help should happen within the lesson, at the moment of doubt.

→ Prevents flow disruption and increases usage likelihood

✸

AI as an assistant with transparency

AI supports, while the creator remains the source of truth, and users understand who they are interacting with.

→ Builds trust, increases adoption, and prevents expectation mismatch

✸

Speed over perfection

Fast responses are more valuable than perfect ones.

→ Reduces frustration and keeps students engaged

✸

Continuous learning through feedback

The system evolves based on user behavior and feedback.

→ Improves response quality over time

✸

Scalability by design

The solution must work for both small and large audiences.

→ Avoids proportional growth in operational cost

✸

Reduced cognitive effort

Interactions should be simple, direct, and predictable.

→ Encourages repeated use throughout the learning journey

discovery

To deeply understand the problem, we combined strategic alignment, qualitative research, and behavioral analysis.

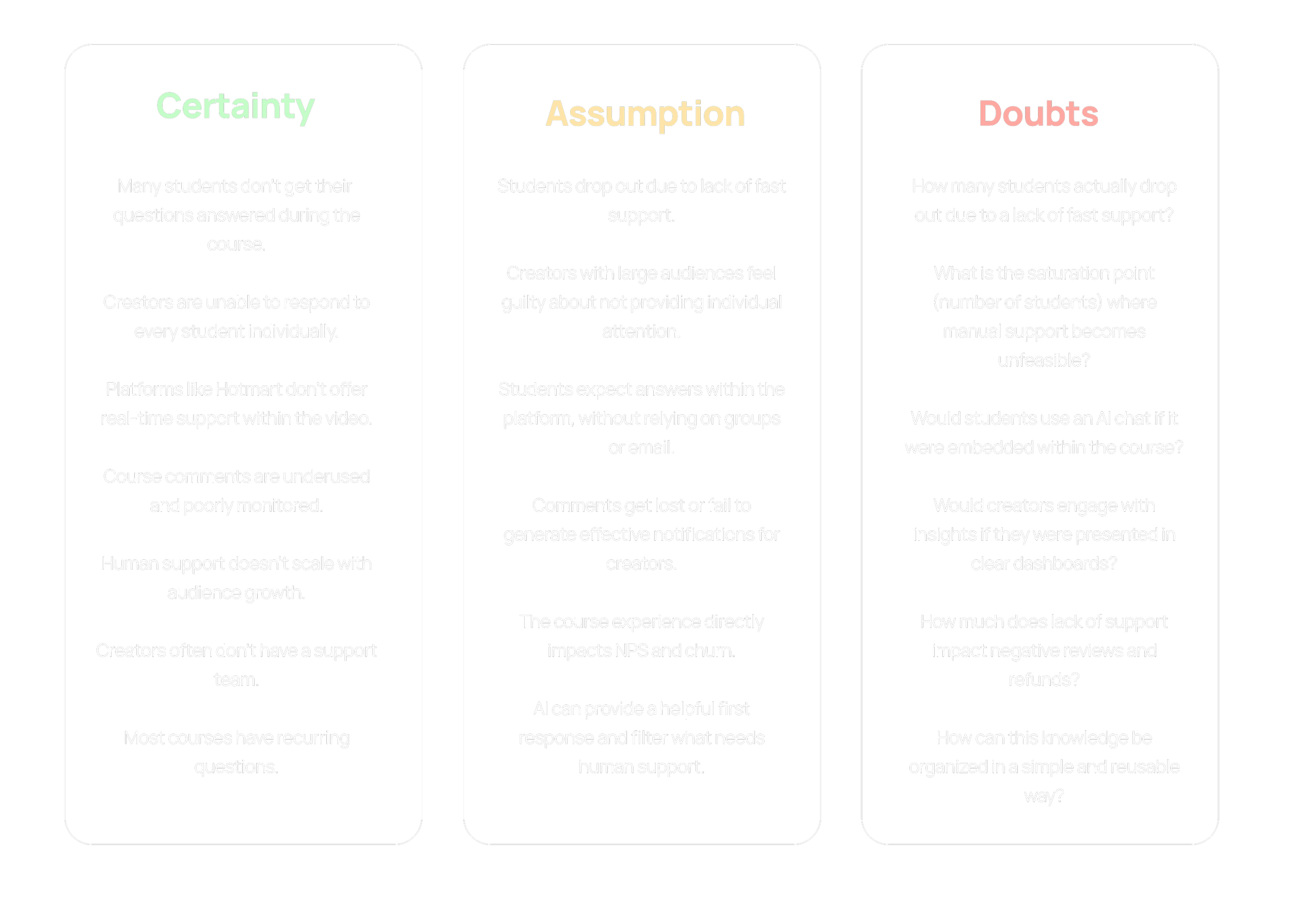

CSD

Stakeholder alignment

We started by aligning with product, business, and engineering teams to map initial perceptions, constraints, and goals.

This step helped consolidate the problem from multiple perspectives and ensured a shared direction from the beginning.

CSD

We organized early knowledge using the CSD framework to structure what we knew and identify gaps.

Certainties

Support does not scale with user growth.

Assumptions

AI could absorb recurring questions.

Doubts

What is the real impact on engagement and student behavior?

The CSD directly guided the focus of our research.

INTERVIEWS

Qualitative research

We designed interview scripts for students and creators focusing on:

Behavior during the course

Experience with questions and support

Expected response time

Openness to AI-powered support

Interviews

We conducted interviews with two key groups:

Students

To understand frustrations and moments of abandonment during the learning journey.

Creators

To map operational load and scalability limitations.

This revealed the problem from both sides of the ecosystem.

OUTCOMES

Creators with large audiences are unable to keep up with all questions.

→ Lack of time is the main constraint.

They notice drops in engagement and NPS, but are unable to measure this impact directly.

→ Negative impact without metrics.

✸ INSIGHT: Centralization tool + analytics + AI

Provide simple feedback options (👍👎 or “was this helpful?”).

→ Interest in AI

Students report dropping out before reaching the halfway point, even when they like the content, due to unresolved questions.

→ Expectations and frustrations

✸ INSIGHT: Ensure continuous feedback and offer multiple response formats

Most students have questions throughout the course, especially in the early modules.

→ In-course behavior

Comments within lessons are perceived as a “black box”: there are no responses, notifications, or feedback.

→ Support channels

✸ INSIGHT: Prioritize immediate support, ideally powered by AI.

Creators would love to have a "smart FAQ," but they don't know how to set it up or maintain it.

→ Questions are repetitive

They are open to AI answering questions, but want control and the ability to edit, review, or view the history.

→ They see value in automation (with reservations).

✸ INSIGHT: Give creators ways to feed the AI and validate its answers.

Behavioral analysis

We analyzed comments within course environments to identify real patterns of questions. Most questions concentrated around three themes, each recurring strongly across students:

Understanding the lesson content

Course navigation

Technical issues

Over 80% of the questions

"I didn't understand how to apply this technique. Can you give another example?"

- Questions about the lesson content

“Which module is the most important to start applying?”

- Questions about the course progress

“How do I download the class materials? Where can I find the supporting files?”

- Technical questions

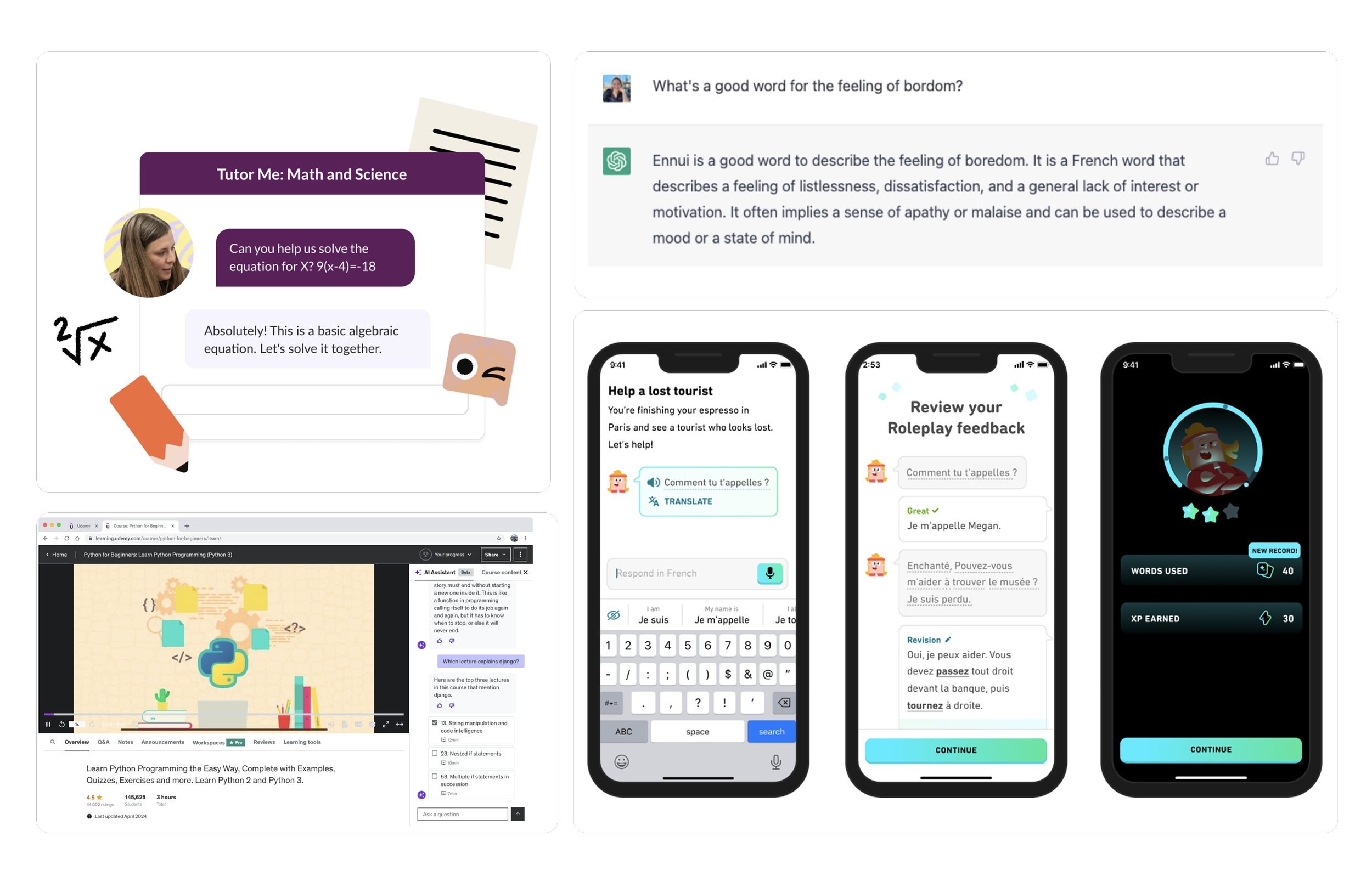

benchmark

We analyzed how AI is being used across educational and content platforms.

More mature players integrate AI directly into the learning experience, while others still treat it as an external or experimental support layer.

This reinforced the opportunity to differentiate through contextual integration.

✸ Leaders in the use of AI: Khan Academy and Duolingo

They focus on structured education and proprietary content, with AI integrated from the start.

✸ Partial adoption phase: Udemy and Coursera

They are testing AI as a support assistant, including ways to feed the AI and validate its responses.

✸ No AI support yet: Cademí, Circle, and Kajabi

PROBLEM REFINEMENT

How might we move from manual support to a system that learns, scales, and provides contextual responses 24/7?

Product strategy as growth levers

We reframed support as a set of product levers, each designed to drive measurable impact across efficiency, experience, and growth.

✸

Knowledge structuring → Efficiency at scale

Transform course content (video → transcripts) into an AI-powered knowledge base.

Outcome

Reduces dependency on manual responses and absorbs repetitive questions

Metrics

↓ Support ticket volume

↓ Cost per support interaction

↑ Self-service resolution rate
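To make the transcript-to-knowledge-base idea concrete, here is a minimal sketch. It is an illustration only, not Hotmart's implementation: the names `Chunk`, `build_knowledge_base`, and `retrieve`, the sample transcripts, and the word-overlap scoring are all assumptions. A production system would more likely use embeddings and a language model.

```python
from __future__ import annotations

import re
from dataclasses import dataclass


@dataclass
class Chunk:
    lesson: str  # hypothetical lesson identifier
    text: str    # a slice of that lesson's transcript


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of word tokens."""
    return set(re.findall(r"[a-z0-9']+", text.lower()))


def build_knowledge_base(transcripts: dict[str, str], sentences_per_chunk: int = 2) -> list[Chunk]:
    """Split each transcript into chunks of consecutive sentences."""
    chunks = []
    for lesson, transcript in transcripts.items():
        sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", transcript) if s.strip()]
        for i in range(0, len(sentences), sentences_per_chunk):
            chunks.append(Chunk(lesson, " ".join(sentences[i:i + sentences_per_chunk])))
    return chunks


def retrieve(question: str, kb: list[Chunk]) -> Chunk | None:
    """Return the chunk sharing the most words with the question, or None if nothing overlaps."""
    question_words = tokenize(question)
    best, best_score = None, 0
    for chunk in kb:
        score = len(question_words & tokenize(chunk.text))
        if score > best_score:
            best, best_score = chunk, score
    return best


# Made-up transcripts, for illustration only.
transcripts = {
    "lesson-1": "Welcome to the course. Supporting files are in the materials tab. Download them before starting.",
    "lesson-2": "Today we practice the pricing technique. Start with a small example.",
}
kb = build_knowledge_base(transcripts)
match = retrieve("Where can I find the supporting files?", kb)
```

Returning `None` when nothing overlaps gives the product a natural point to hand the question over to the creator instead of guessing.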

✸

Creator-in-the-loop → Trust & adoption

Provide visibility and control for creators to monitor and intervene when needed.

Outcome

Builds trust in AI and increases willingness to adopt the solution

Metrics

↑ Creator adoption rate

↑ Feature activation per course

↓ Manual intervention over time

✸

In-context support → Engagement & continuity

Embed support directly into the lesson, at the moment of doubt.

Outcome

Removes friction, increases usage, and keeps students in the learning flow

Metrics

↑ Feature adoption rate

↑ Interactions per student

↑ Course completion rate

↓ In-course drop-off

✸

Compounding knowledge system

Support evolves into a system that improves with usage.

Impact

Knowledge accumulates over time.

Student pain points become visible and actionable.

Support scales without proportional cost increase.

✸

Feedback-driven system → Quality over time

Use user feedback to continuously improve response quality.

Outcome

Creates a system that learns and improves without additional operational cost

Metrics

↑ Positive feedback rate (👍)

↓ Repeated questions

↑ Perceived answer accuracy

↑ Course NPS
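As a rough sketch of how the positive feedback rate could be computed and used, the snippet below aggregates 👍/👎 ratings per answer and flags poorly rated answers for creator review. The data shape, the function names, and the `threshold` and `min_votes` values are all hypothetical and were not part of the project.

```python
from __future__ import annotations

from collections import Counter, defaultdict


def positive_rates(ratings: list[tuple[str, bool]]) -> dict[str, float]:
    """Return the share of thumbs-up ratings for each answer id."""
    ups = defaultdict(int)
    totals = defaultdict(int)
    for answer_id, helpful in ratings:
        totals[answer_id] += 1
        if helpful:
            ups[answer_id] += 1
    return {answer_id: ups[answer_id] / totals[answer_id] for answer_id in totals}


def needs_review(ratings: list[tuple[str, bool]], threshold: float = 0.5, min_votes: int = 3) -> list[str]:
    """List answers with enough votes whose positive rate falls below the threshold."""
    votes = Counter(answer_id for answer_id, _ in ratings)
    rates = positive_rates(ratings)
    return sorted(a for a, rate in rates.items() if votes[a] >= min_votes and rate < threshold)


# Made-up ratings: (answer id, was it helpful?)
ratings = [
    ("download-materials", True), ("download-materials", True),
    ("download-materials", True), ("download-materials", False),
    ("apply-technique", False), ("apply-technique", False), ("apply-technique", True),
]
rates = positive_rates(ratings)
flagged = needs_review(ratings)
```

Requiring a minimum number of votes keeps a single 👎 from sending an answer back to the creator.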

✸

Business impact

The solution drives measurable outcomes at a business level.

Impact

↑ Retention

↓ Churn

↑ Lifetime value

solution

An intelligent assistant for the Creator and a mentor for the student.

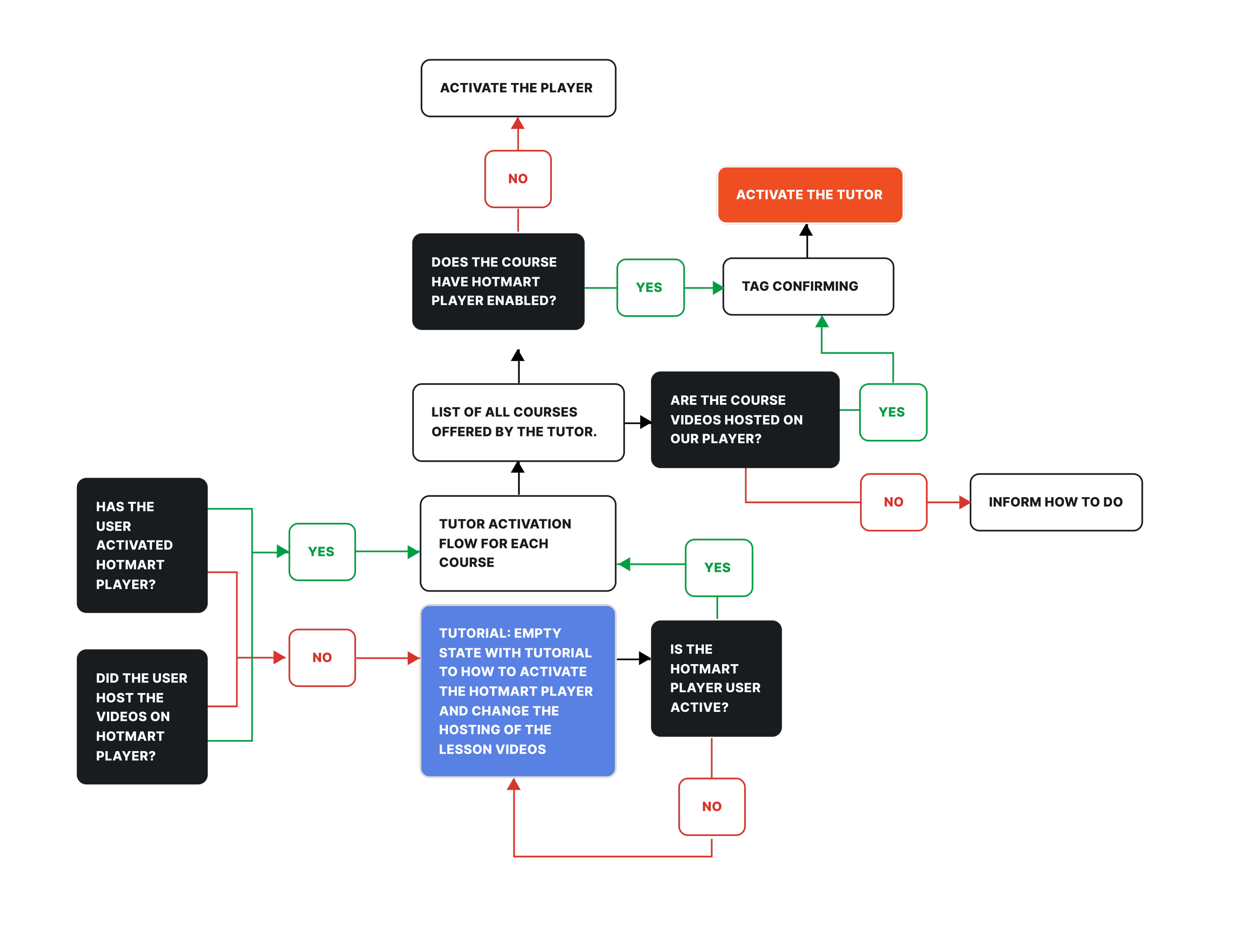

First, we needed to understand the flow that enables our AI to function: Hotmart’s video player generates text transcripts, and those transcripts feed the AI. From there, we mapped key touchpoints and created scenarios for users who already use the Hotmart player and for those who don’t.

We also defined how to educate users on activating this initial step, which is essential to unlock access to our solution.

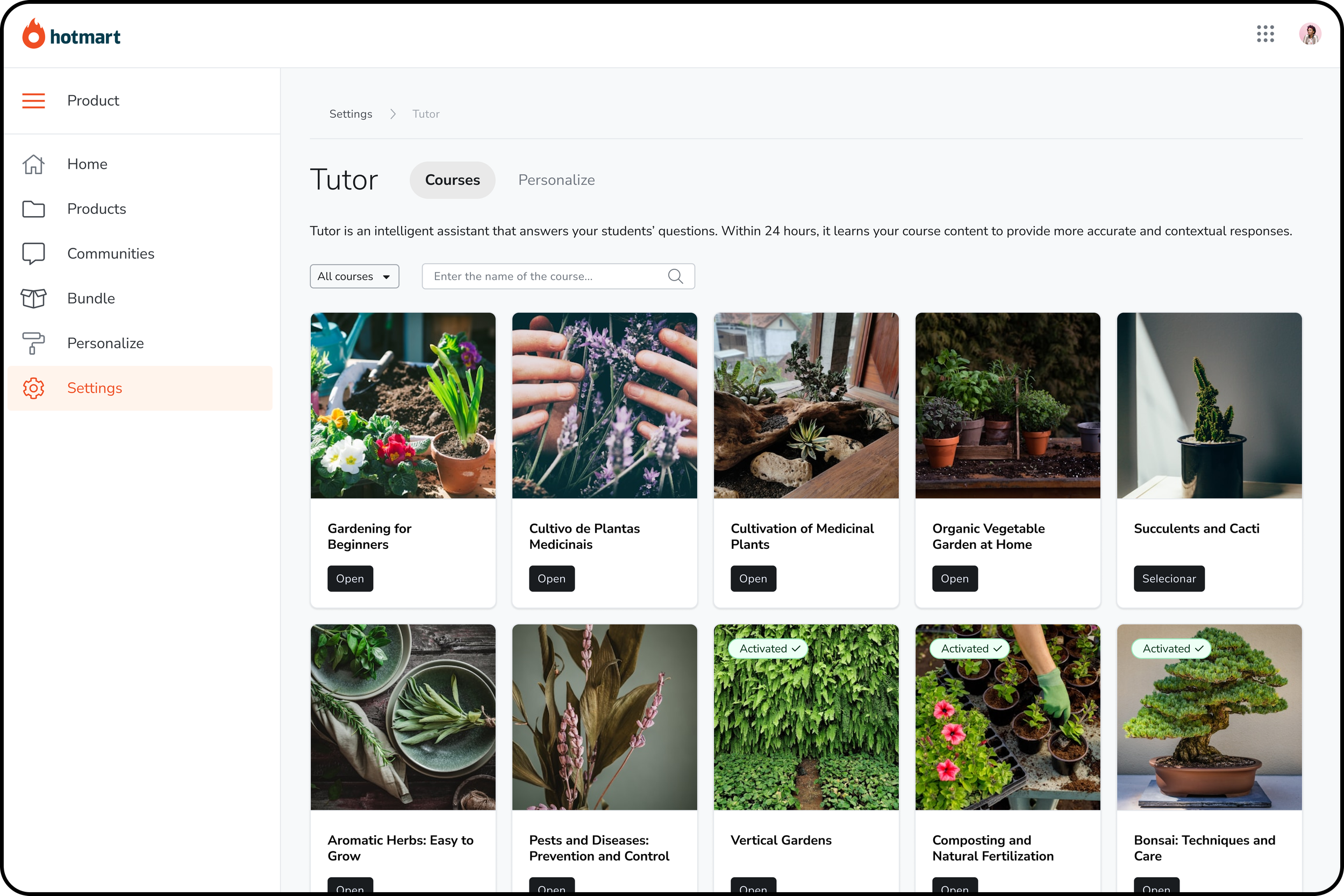

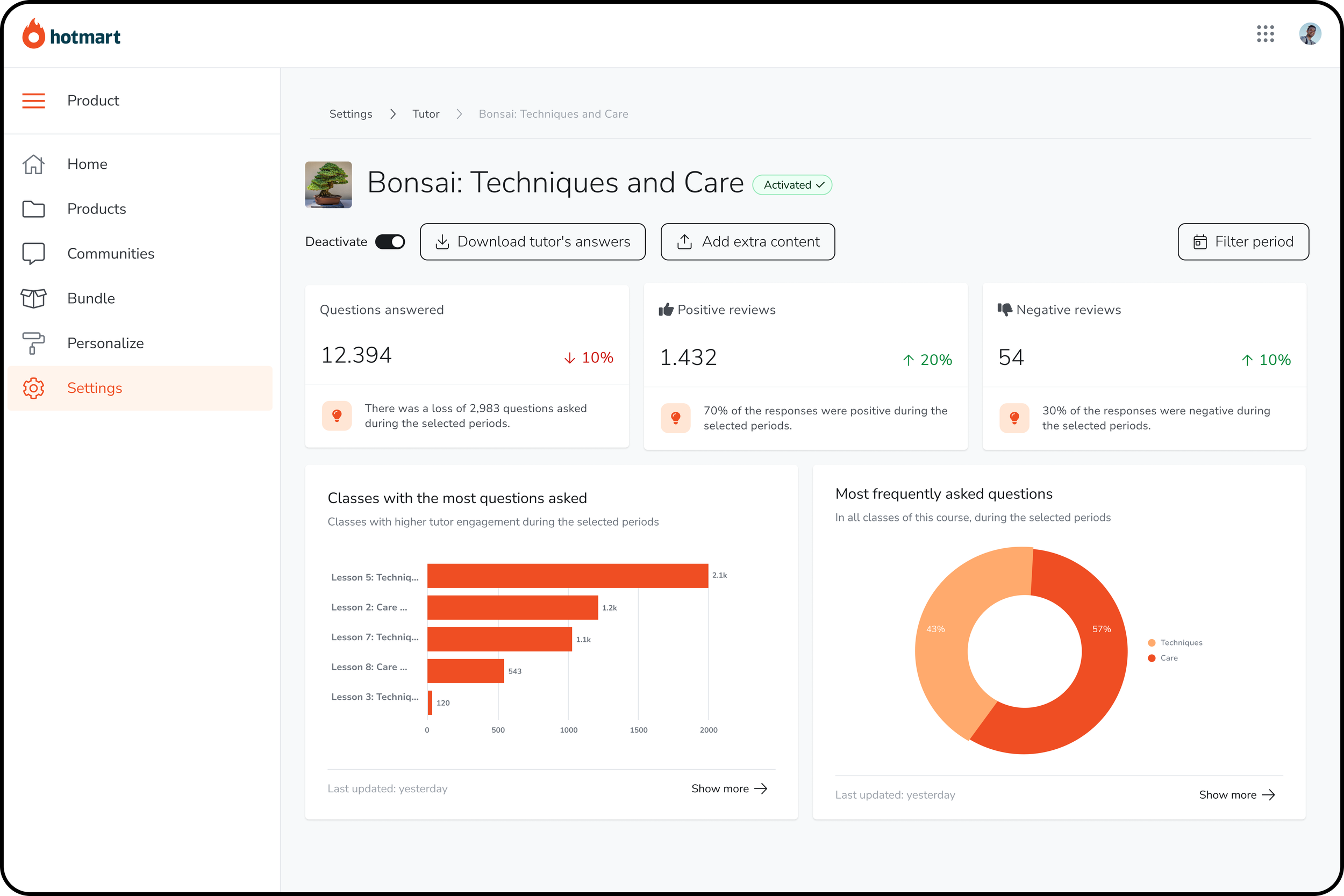

Course listing screen for Tutor activation.

In this configuration section, creators can manage courses in relation to the Tutor and add extra materials to enhance the AI’s understanding of the lesson content.

Within each course, there is a dedicated section featuring an analytics dashboard that provides insights to guide creators in developing new content.

Additionally, the Tutor can be activated or deactivated at any time.

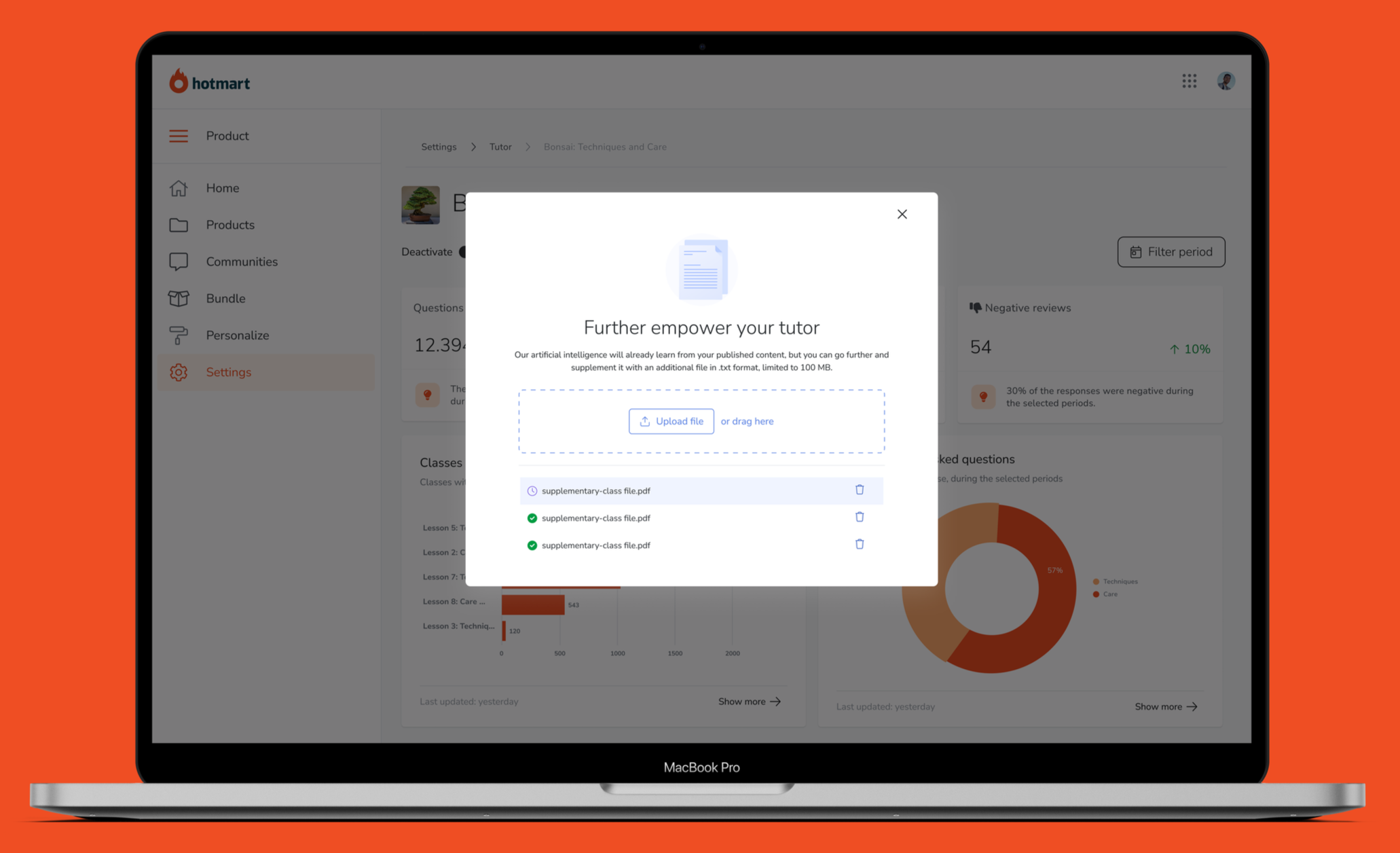

FURTHER EMPOWER YOUR TUTOR

FAQs, additional materials, platform-related technical topics, and content based on the most frequent student questions.

This makes the Tutor’s knowledge base easily accessible, increasing its ability to provide effective responses.

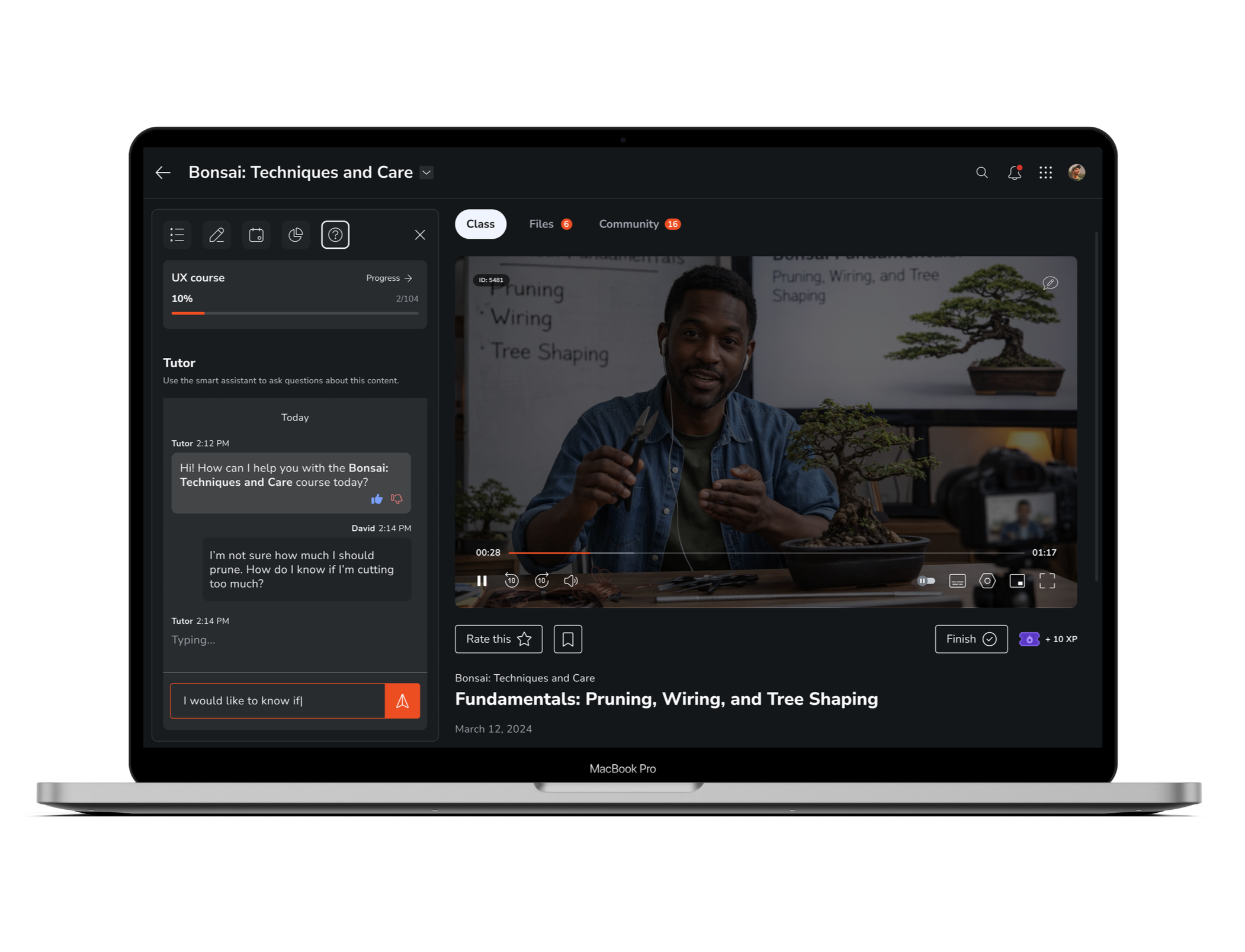

Learn at your pace, get answers instantly.

The autonomy students needed to complete the course.

validation

We designed a progressive rollout plan, starting with a smaller, controlled user base. Over three waves, we gradually expanded until reaching 100% of users.

Throughout this process, we continuously validated and iterated on the solution, allowing us to deliver a more consistent product with fewer risks before full release.

3-wave rollout plan

First wave

100 users

In this wave, we worked with a smaller user base, making the rollout more controllable. We gathered qualitative feedback through interviews and support tickets.

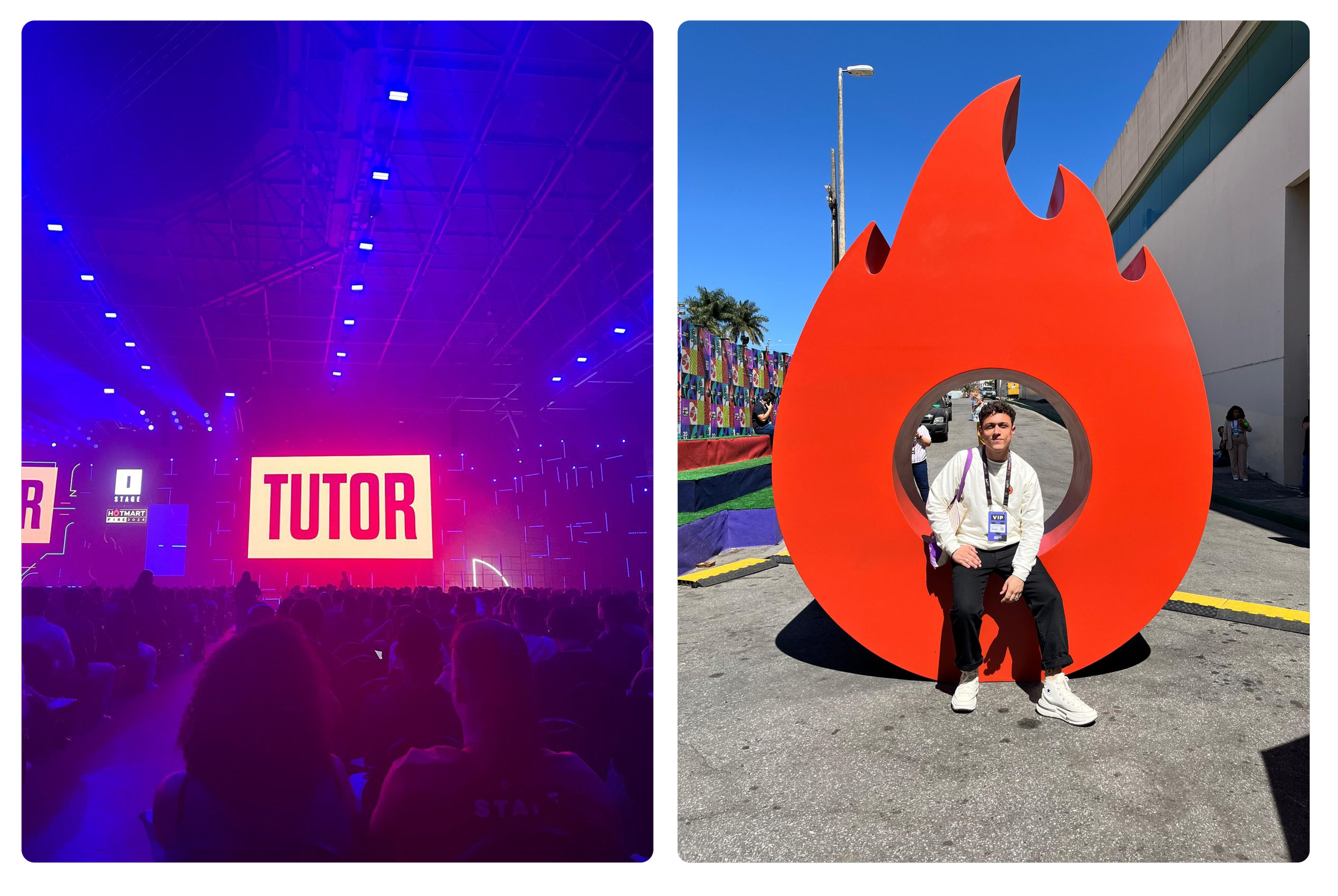

fire festival

The Fire Festival by Hotmart is one of the largest marketing and business events in Latin America, bringing together entrepreneurs, creators, and experts to share strategies, trends, and growth in the digital market. On the main stage, during the opening, we officially launched this feature, which further boosted demand.

Second wave

1k users

In this wave, new users benefited from the learning of early adopters, which significantly reduced the learning curve. Additionally, we were able to validate the solution through social proof.

Third wave

100% of the base

In the final wave, there was already organic demand for the feature, along with users returning to the platform driven solely by this new capability.
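One way the three waves above could be gated is sketched below. How users were actually selected for each wave is not described here, so the stable hash ordering, `WAVE_SIZES`, and `users_in_wave` are assumptions for illustration. Only the wave sizes (100 users, 1k users, 100% of the base) come from the rollout plan.

```python
from __future__ import annotations

import hashlib

# Wave sizes from the rollout plan; None means the whole user base.
WAVE_SIZES = {1: 100, 2: 1000, 3: None}


def stable_rank(user_id: str) -> str:
    """Hash the user id so the ordering is stable across runs and unrelated to sign-up order."""
    return hashlib.sha256(user_id.encode("utf-8")).hexdigest()


def users_in_wave(all_users: list[str], wave: int) -> set[str]:
    """Return the users enabled in a wave; each wave contains all earlier ones."""
    ordered = sorted(all_users, key=stable_rank)
    size = WAVE_SIZES[wave]
    return set(ordered if size is None else ordered[:size])


# Hypothetical user base of 5,000 ids.
all_users = [f"user-{i}" for i in range(5000)]
wave_1 = users_in_wave(all_users, 1)
wave_2 = users_in_wave(all_users, 2)
wave_3 = users_in_wave(all_users, 3)
```

Because every wave takes a prefix of the same ordering, users enabled in the first wave keep access in the later ones.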

metrics & impact

✸

for creators

Reduced effort with support from AI.

Increased student confidence in creators’ support.

Support that scales without losing quality.

✸

for students

Questions answered in real time.

Reduced frustration and drop-off.

A smoother learning experience.

✸

Results

Completion rate: +25%

Average NPS: +2 points

Questions resolved without human intervention: 85%

Reduction in manual tickets: -50%

Time spent on the platform: +28%

Cost per interaction: -40%

Technology doesn’t replace the teacher. It amplifies their ability to teach.

CONCLUSION

Learnings

Translate frustration into product opportunities.

Collaborate with AI and data without losing the human focus.

Iterate quickly based on real user feedback.

NEXT STEPS

Invest in stronger AI onboarding for creators.

Expand to mobile (app versions).

Improve tone of voice and conversational design.